Is that how you spell "shenannigans?" Oh well.

Received a nifty lil iPod nano this Christmas. An obvious conundrum hit right away... my entire music library is formatted & encoded using Ogg Vorbis. iPods use AAC or MP3s.

Amarok - KDE's killer app - has great iPod support. Of course, the gold standard for iPod support on Linux remains gtkpod - an app that allows your little fingers to get into every facet of your iPod's filesystem layout. But Amarok makes me so tingly inside it's hard to express.

For example, Amarok can transcode your files on-the-fly. Are all your files in Speex but you need them in MP3 format for your digital player? Just queue your files in Amarok's media device browser and it will automagically convert them. Everything in Vorbis but you need AAC? transKode will take care of it for you.

Not to mention Amarok now has an in-browser music store: Magnatune. I freakin' love Magnatune. The fact that it's now tightly integrated with Amarok makes me giddy.

But there are a few problems. I was up until 1:30 AM this morning trying to get transKode and Amarok to play nicely... there were so many missing dependencies it wasn't even funny. And were there error messages? Nooooooooooooooooo.... I had to go and track down faac, vorbis-tools, gdk-pixbuf, blah blah blah. After I made sure every possible package was installed, I was finally able to get Amarok to convert files automagically. I can't blame SuSE for not installing those dependencies... transKode is a plug-in to Amarok after all, and installing every possible dependency for Amarok would suddenly make it heftier than GnuCash.
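This doesn't cover how transKode works internally. The dependencies above do hint at what a Vorbis-to-AAC conversion involves: decode to PCM with `oggdec` (from vorbis-tools), then encode with `faac`. Below is a minimal Python sketch of that two-step pipeline. It is not transKode's code. The function name `vorbis_to_aac_commands` and the paths are hypothetical, and the sketch only builds the command lines so you can inspect them before handing them to `subprocess.run()`.

```python
import shlex
from pathlib import Path


def vorbis_to_aac_commands(ogg_path: str, out_dir: str) -> list[list[str]]:
    """Build (but don't run) the decode and encode commands for one track."""
    src = Path(ogg_path)
    wav = Path(out_dir) / (src.stem + ".wav")  # intermediate PCM file
    m4a = Path(out_dir) / (src.stem + ".m4a")  # AAC output for the iPod
    decode = ["oggdec", "-o", str(wav), str(src)]  # oggdec ships with vorbis-tools
    encode = ["faac", "-o", str(m4a), str(wav)]
    return [decode, encode]


if __name__ == "__main__":
    for cmd in vorbis_to_aac_commands("Music/track01.ogg", "/tmp"):
        print(shlex.join(cmd))  # inspect, then pass cmd to subprocess.run(cmd, check=True)
```

Tags (artist, album) don't survive the trip through WAV, so they would need to be re-applied separately. Whether faac wraps the output in an MP4 container based on the `.m4a` name depends on the build; check `faac --help` on your system, since some builds want `-w`.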

Even with these limitations, Amarok is suiting my needs much, much better than iTunes. I don't think I'd be able to do jack nor squat with iTunes, considering it can't transcode my library. Next stop is trying to get contacts, calendar, to-do lists and notes to sync. But I've only filled up 10% of my 4GB so far. w00t!

Speaking of software, I'll stop my openSUSE 10.2 rants after this post. Some of you may have noticed Hubert Mantel is coming back to SuSE, which I hope is a good omen of things to come. The distribution has slipped since he left, so I'm hoping he can bring things back on course. I must say, however, that the bug reports I've filed so far have been resolved promptly. They're triaging bugs well. There are some pretty stale ones, like Evolution not running correctly in KDE. But by comparison, Ubuntu has had a bug outstanding for what seems like an eternity, and it's stopping me from switching over: it won't mount encrypted partitions at boot time.

openSUSE is going well now... dual-monitor support is working flawlessly, SCPM is helping me effortlessly switch between home and office, and Amarok is allowing my expansive music collection to survive on my iPod. Hard to complain at this moment.

Wednesday, December 27, 2006

Monday, December 18, 2006

Sloppy SuSE

Have you seen Novell's "improvement" on the KDE menu? Have you seen the quotes around the word "improvement"? I have no idea what they were thinking. Reading the openSUSE site they claim the menu was the brainchild of hundreds of hours of usability study and research, examining every minute detail of user interaction. And yet they came up with a menu that takes 50 clicks to launch an application. Riiiiiight.

I just spent the past three days trying to get dual monitors working with the very insipid ATI card in my Latitude D600. Bear in mind, this isn't the first time I've tried to get a Xinerama-like "bigdesktop" setup working with this machine. Nope. I've had one since I've owned this machine, which is going on two and a half years. And yet, with every OS install on this machine, I've had to wrestle with getting the ATI drivers to obey. It's absolutely ridiculous.

The crazy thing is that this isn't even about different driver versions: from XFree86 to XOrg, the same driver has behaved weird or wacky in different ways. The only version of the driver I can run is 8.28.8, and even that would be unavailable if it weren't for K's cluttered loft. 8.29.6 causes the LCD on my laptop to slowly (and I'm not kidding here) burn and bleed pixels. 8.32.5 won't even detect a screen. It's a pretty sorry state of affairs, especially considering 8.28.8 won't even work with XOrg 7.2, which is what SuSE 10.2 ships with. I have to downgrade to XOrg 7.1, install the 8.28.8 driver, then hack the config file.

Don't even get me started about the xorg.conf file. Even though my version of the driver stays the same, with each SuSE release the file has to change. The release before last, I had to tell it my "DesktopSetup" was "0x00000000". Last release I had to set ForceMonitors to "auto,auto" for some unexplained reason (actually specifying the monitors wouldn't work). And in this release I had to set ForceMonitors to "lvds,tmds" and explicitly specify the HSync2 and VRefresh2 of the monitor in the "Device" section. If I didn't, it would either never turn the monitor on, choose an incorrect frequency or choose a lower resolution.

If the above sounds like absolute rubbish, you're absolutely correct. And SuSE isn't the cause of the problem... it's ATI. Ubuntu, Fedora... they'd have the same problem. It's just that ATI doesn't feel like they need to adhere to "standards" of configuration at all, and their driver is simply unstable and, at times, unusable. The lesson: never, in your life, buy ATI hardware.

Friday, December 15, 2006

Send SuSE Back...

I upgraded my desktop and laptop to openSUSE 10.2 this week. Wow. Bad wow.

First off, ATI's support of Linux drivers is still abysmal. Not only are their configuration files whack, but their drivers can't be composited, don't support Xinerama (on my laptop at least) and are a pain to install. SuSE ships with a release-candidate XOrg 7.2 (because they are really into Xgl and composite extensions), and ATI wasn't ready to support it yet... probably since it hasn't been released. And yet, ATI quickly updated their driver. Still, on my laptop neither the newer driver nor XOrg 7.2 works. I had to down-port to 7.1, then find an older driver and use that. Hacked, ugly, and it wasted a day and a half.

Nvidia support still isn't much better. When the computer first starts post-install, you get nothing but a blank X window. Nice. You actually have to install the proprietary Nvidia drivers first, then you can run your window manager. WTF?

As for sound, that was also borked. My laptop did fine with the install, but with my AMD64 desktop sound didn't work at all unless I was root. For some crazy-go-nuts reason, my users weren't in the "sound" group and didn't have permission to access /dev/dsp. So that was fun chasing down.
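For anyone chasing the same problem, here's a small diagnostic sketch in Python (standard library only). It checks the two things that bit me: whether a user is in the `sound` group, and whether the current process can open `/dev/dsp` for reading and writing. The function names are illustrative. The group name is the one from my system; other distros may use `audio` instead.

```python
import grp
import os
import pwd


def user_in_group(username: str, group_name: str) -> bool:
    """True if the user's primary group is group_name, or the user is
    listed as a supplementary member of it."""
    try:
        group = grp.getgrnam(group_name)
        user = pwd.getpwnam(username)
    except KeyError:
        return False  # no such group or no such user on this system
    return user.pw_gid == group.gr_gid or username in group.gr_mem


def can_open_rw(path: str) -> bool:
    """Read/write access check for the *current* process; False if path is missing."""
    return os.access(path, os.R_OK | os.W_OK)


if __name__ == "__main__":
    me = pwd.getpwuid(os.getuid()).pw_name
    print("in 'sound' group:", user_in_group(me, "sound"))
    print("/dev/dsp usable:", can_open_rw("/dev/dsp"))
```

If the group check fails, add the user to the group (YaST or your distro's user tool) and log out and back in. Group membership is only picked up at login.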

The Gnome people love messing with my head. Now they've created a keyring manager not unlike KWallet except, just like everything else for Gnome, it's far too convoluted. Evolution uses it to obtain passwords, but openSUSE doesn't open the keyring properly prior to launching Evolution. So I have to resort to an ugly, dirty hack for it to work.

So let's sum up here before I rant too long. My desktop wouldn't work immediately after install. Evolution didn't work. My laptop could run on the open-source drivers, but no luck on dual-monitor configs with the proprietary driver. Sound took hacking to work on the desktop. I think we need to let the original SuSE have their distro back - they always polished things to a nice sheen.

On the upside, ODE 0.7 is automagically included, it seems. So at least I have a standard ODE to develop on / release with. Also, Novell has admitted to ZEN sucking, and they've offered alternatives, although their really horrid ZEN update is still the default. Encrypted partitions work exactly as they should, and NetworkManager works as well.

Hopefully even though openSUSE 10.2 was off to a really rocky start, it will be smooth sailing from here.

Tuesday, December 12, 2006

Trapped In The (Trigonometry) Matrix

When everyone in intermediate school asked the math teacher "why the hell should we spend four months on matrix math," he should have looked directly at me and said "because, chuck, you'll need to know how to rotate sprites in both 2D and 3D space, otherwise your code in the future will suck." I would have listened then.

I was finally able to get Qt to properly apply a bitmask to my QWidget, so I can now have a window open that tilts at a 45 degree angle. I've got to start thinking in radians (π/4, not 45)... ODE works in radians, Qt in degrees.
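A window tilted by 45 degrees is really a rectangle whose visible area is a rotated copy of itself. That means the mask is just the four rotated corners. The Python sketch below computes those corners in Qt's screen convention (origin top-left, y down), converting degrees to radians on the way in. The function name is hypothetical and not Qt API. In Qt itself you would feed the points into a `QPolygon`, build a `QRegion` from it, and pass that to `QWidget::setMask()`; that wiring is omitted here.

```python
import math


def tilted_rect_polygon(w: float, h: float, degrees: float) -> list[tuple[int, int]]:
    """Corners of a w x h rectangle rotated about its centre, in screen
    coordinates (origin top-left, y down), shifted so no coordinate is negative."""
    theta = math.radians(degrees)  # Qt talks degrees; math.cos/sin want radians
    c, s = math.cos(theta), math.sin(theta)
    cx, cy = w / 2, h / 2
    rotated = []
    for x, y in [(0, 0), (w, 0), (w, h), (0, h)]:
        dx, dy = x - cx, y - cy
        # Standard rotation matrix; with y pointing down, a positive angle
        # turns clockwise on screen, the same sense as QPainter::rotate().
        rotated.append((dx * c - dy * s, dx * s + dy * c))
    min_x = min(px for px, _ in rotated)
    min_y = min(py for _, py in rotated)
    return [(round(px - min_x), round(py - min_y)) for px, py in rotated]


print(tilted_rect_polygon(100, 100, 45))
```

For a 100x100 window at 45 degrees this yields the expected diamond, `[(71, 0), (141, 71), (71, 141), (0, 71)]`.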

There are a number of differences between Qt and ODE that can be a real pain in the butt. ODE's coordinate origin is at the bottom-left of the screen (or world)... which I guess kinda makes sense for physics coordinates that want to model themselves after the real world. But Qt uses what everyone else in computer-land uses: an origin at the top-left of the screen. It's whack. That means counter-clockwise rotations in ODE land are clockwise rotations in Qt land. Increasing values of y send you up in ODE, down in Qt. Normals face one way with ODE, the other way with Qt. Blech.
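The conversions described above boil down to a few one-liners. This sketch rests on some assumptions. The game is 2D, and bodies only rotate about ODE's z axis. World units map to pixels by a single hypothetical `scale` factor. The rotation is read from ODE's `dMatrix3`, which stores a 3x3 matrix row by row with each row padded to four `dReal`s, so element (i, j) sits at index `i*4 + j`. Function names are illustrative.

```python
import math


def world_to_screen(x: float, y: float, screen_height: float,
                    scale: float = 1.0) -> tuple[float, float]:
    """Physics world (origin bottom-left, y up) -> Qt widget (origin top-left, y down)."""
    return x * scale, screen_height - y * scale


def ode_angle_to_qt_degrees(theta: float) -> float:
    """ODE: radians, positive = counter-clockwise with y up.
    Qt: degrees, positive = clockwise with y down.
    The two "positive" directions are opposite on screen, hence the minus sign."""
    return -math.degrees(theta)


def planar_angle_from_dmatrix3(r: list[float]) -> float:
    """Angle about z from a dMatrix3 laid out as 3 rows of 4 dReals.
    For a pure z rotation, r[0] = cos(theta) and r[4] = sin(theta)."""
    return math.atan2(r[4], r[0])
```

A body's on-screen angle for Qt would then be `ode_angle_to_qt_degrees(planar_angle_from_dmatrix3(rotation))`, where `rotation` holds the 12 values `dBodyGetRotation()` points to.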

A big tip o' the hat to Stefan Waner and Steven R. Costenoble for their Finite Mathematics page. Also big thanks to Columbia College of Missouri for reminding me how to reduce a matrix in my head. Without them I wouldn't have been able to remember how to invert the rotation matrix and easily convert ODE rotation matrices to Qt rotation matrices.
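The matrix trick is less mysterious than it sounds. Flipping the y axis amounts to conjugation by F = diag(1, -1). For a rotation matrix, that conjugation returns its inverse, and the inverse of a rotation is simply its transpose, so no row reduction is needed:

```latex
R(\theta) = \begin{pmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{pmatrix},
\qquad
F = \begin{pmatrix} 1 & 0 \\ 0 & -1 \end{pmatrix}

F\,R(\theta)\,F
= \begin{pmatrix} \cos\theta & \sin\theta \\ -\sin\theta & \cos\theta \end{pmatrix}
= R(-\theta) = R(\theta)^{\mathsf{T}} = R(\theta)^{-1}
```

Since F is its own inverse, the same formula converts Qt matrices back to ODE ones.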

Friday, December 08, 2006

Computer Science Isn't Science

...it's math.

The inside joke in universities is that if a subject has the word "science" concatenated onto it, it's not really a science. "Social Science" isn't a science. "Computer Science" isn't a science. Physics is. Chemistry is.

I think it's true. What's now "Computer Science" (or in other completely nonsense realms, "Informatics") is really mathematics. I think a lot of modern colleges and universities are getting it completely wrong... don't put CS in with engineering, vocation or *gasp* business. Put it where it belongs.

The knowledge and understanding of algorithms is what separates decent coders from great ones. Nowhere is this demonstrated better than in Beyond3D's "Origin of Quake3's Fast InvSqrt()". The article tries to trace a very elegant, fast and extremely effective five lines of code back to its original author. The understanding and anthropology of this Newton-Raphson inverse square root codification acts as a veritable who's-who in 3D real-time rendering, from Carmack to Gary Tarolli.
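For the curious, here is the trick transliterated into Python. It's an illustration, not the original C. First, `struct` reinterprets the bits of a 32-bit float as an unsigned integer, which is what the C version does with a pointer cast. Next, the magic constant `0x5f3759df` produces a first guess. Finally, one Newton-Raphson step on f(y) = 1/y² - x refines it. Python does the refinement in double precision, so the digits won't match the C version exactly. The function also assumes x > 0.

```python
import math
import struct


def fast_inv_sqrt(x: float) -> float:
    """Approximate 1/sqrt(x) for x > 0 using the Quake III bit trick."""
    # Reinterpret the 32-bit float's bits as an unsigned 32-bit integer.
    i = struct.unpack("<I", struct.pack("<f", x))[0]
    # The bits act as a rough, biased log2(x): shifting right halves it, and
    # subtracting from the magic constant negates it (restoring the bias),
    # giving a first guess for x ** -0.5.
    i = 0x5F3759DF - (i >> 1)
    y = struct.unpack("<f", struct.pack("<I", i))[0]
    # One Newton-Raphson step on f(y) = 1/y**2 - x.
    return y * (1.5 - 0.5 * x * y * y)


print(fast_inv_sqrt(4.0), 1 / math.sqrt(4.0))
```

`fast_inv_sqrt(4.0)` comes out at about 0.4992 against an exact 0.5. With a single Newton step, the relative error stays under roughly 0.2%.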

Take a look at "Exceptional C++", reviewed by the good ol' Register. This is not just a trove of complex but simple C++ snippets. It can be a litmus test for those rare C++ hackers that can change the physical properties of the world with a mere wave of their hand.

For those of us who are still on the intermediate side of the scale, S. Dasgupta, C.H. Papadimitriou, and U.V. Vazirani have been releasing drafts of their textbook, "Algorithms," to the general laudations of the programming populace. It really is a fantastic resource, if for no other reason than to have such a nice reference on-hand on-line.

Friday, December 01, 2006

So You Want To Be An Indie (Console) Developer?

I love Andre' LaMothe. How could you not? I remember how completely... dork-filled I felt when my copy of his Black Art of 3D Game Programming arrived at my office. Well, not really an office. At the time I was a consultant working for a local home builder, and they had shoved me into a 12x12 room with another consultant doing crapwork with MS Access. I was so incredibly psyched when the book came in, but received odd stares from my fellow outsourced monkey when he saw the uber-dork cover that emblazoned the tome. It looked more like someone's AD&D ruleset guide than a programming book. I immediately put it back in the box and reserved it for reading in the bathroom and attics.

But since then Andre' has not only cleaned up his book covers but released some interesting products. Not software, as some would expect. No... Andre has gone decidedly hardware, by selling do-it-yourself consoles that hackers and hobbyists alike love dearly. He started with the XGameStation, a kit made for the hardware-centric folk who wanted to know how their console ticked. They could build, from the ground up, their very own console with whatever hardware they liked. His latest release, the Hydra Game Development Kit, is more about how you create software that stays as close to the hardware as humanly possible. The Hydra looks like a fantastic lil' learning tool - it keeps would-be programmers close to the metal.

In a world of fifth-generation languages and high-level meta-languages, being mindful of registers and opcodes is still definitely needed. Java protects you from worrying about memory allocation, but you still need to understand memory allocation. People forget. This is a nice return-to-your-roots tool that keeps your code-fu sharp. Gamasutra's Q&A with Andre' has a great quote:

You can’t imagine the amount of ownership and excitement someone gets from building a small computer and writing every single line of code that controls EVERYTHING on the system including the raster and TV signal. There is nothing like it.

True dat.

Stop It With The Extra Cores Already!

Game programming is very monolithic and event-driven. I should restate that... it has been very monolithic and event-driven. And now with machines like the PS3, there are five bazillion (slower) cores on a CPU die instead of a single, long-pipeline, high-clocked processor. So if you're going to see any speed-ups in your code, you need to find a way to split your engine logic across multiple threads.

This is hard, mainly due to resource contention and synchronization. Gamasutra has a very down-to-earth and easy-to-read article on the different multithreading architectures developers are evaluating, along with the pros and cons of each.
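To make one family of these architectures concrete, here is a minimal Python sketch of a fork/join frame. Each subsystem (physics, AI, audio) runs as its own task every frame, all of them read the same snapshot of last frame's state, and only the calling thread assembles the next frame. The subsystem functions are hypothetical stand-ins. Because of Python's GIL, this shows the structure rather than a real speed-up; a C++ engine would have the same shape with a native thread pool.

```python
from concurrent.futures import ThreadPoolExecutor


# Hypothetical subsystems: each reads last frame's state and returns only its own results.
def update_physics(state: dict) -> dict:
    moved = [p + v for p, v in zip(state["positions"], state["velocities"])]
    return {"positions": moved}


def update_ai(state: dict) -> dict:
    return {"decisions": ["chase" if p > 10 else "idle" for p in state["positions"]]}


def update_audio(state: dict) -> dict:
    return {"voices": len(state["positions"])}


SUBSYSTEMS = (update_physics, update_ai, update_audio)


def run_frame(pool: ThreadPoolExecutor, state: dict) -> tuple[dict, dict]:
    # Fork: every subsystem runs concurrently against the same read-only snapshot.
    futures = [pool.submit(fn, state) for fn in SUBSYSTEMS]
    # Join: wait for all of them, then merge on the calling thread, which is
    # the only place that builds the next frame's state.
    results: dict = {}
    for future in futures:
        results.update(future.result())
    next_state = {**state, "positions": results["positions"]}
    return next_state, results


with ThreadPoolExecutor(max_workers=len(SUBSYSTEMS)) as pool:
    state = {"positions": [0, 9, 20], "velocities": [1, 2, 3]}
    state, out = run_frame(pool, state)
    print(state["positions"], out["decisions"])  # [1, 11, 23] ['idle', 'idle', 'chase']
```

The AI decisions are based on last frame's positions, not the ones physics just computed. That one-frame lag is the usual price of letting subsystems run side by side without locks.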

To see how someone has actually implemented these architectures, or hybrid versions of them at least, one can look at how Valve has threaded their system. Not only did they have to make their events more atomic, they had to re-order and redesign their entire render pipeline. I still don't quite get how one can do animation in a separate thread (you'd think it would need to be synchronized with shading/rendering/drawing/blah), but the results do seem impressive.

The crazy thing was that they said threads spent 95% of the time reading memory, 5% writing. That's insane. But again, the results speak for themselves.
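A read-heavy workload like that suits double buffering, a common way to thread work without locks (not necessarily what Valve does). Every worker reads freely from last frame's buffer and writes only its own slice of the next frame's buffer. Once all workers finish, the buffers swap. Here's a Python sketch, with a hypothetical neighbour-averaging update standing in for real game logic:

```python
from concurrent.futures import ThreadPoolExecutor


def smooth_chunk(front: list[float], back: list[float], start: int, end: int) -> None:
    """Hypothetical update: each value becomes the average of itself and its
    neighbours. Reads anywhere in `front`, writes only back[start:end]."""
    last = len(front) - 1
    for i in range(start, end):
        left = front[i - 1] if i > 0 else front[i]
        right = front[i + 1] if i < last else front[i]
        back[i] = (left + front[i] + right) / 3


def step(pool: ThreadPoolExecutor, front: list[float], workers: int = 4) -> list[float]:
    """One frame: split the array into slices, update them in parallel, swap."""
    if not front:
        return []
    back = [0.0] * len(front)
    size = -(-len(front) // workers)  # ceiling division
    jobs = [pool.submit(smooth_chunk, front, back, s, min(s + size, len(front)))
            for s in range(0, len(front), size)]
    for job in jobs:
        job.result()  # wait for every slice (and re-raise any worker error)
    return back  # the "swap": this becomes next frame's read-only front buffer


with ThreadPoolExecutor(max_workers=2) as pool:
    print(step(pool, [0.0, 0.0, 3.0, 0.0, 0.0], workers=2))  # [0.0, 1.0, 1.0, 1.0, 0.0]
```

Each element is written exactly once, by exactly one worker, while it is read up to three times. No two workers ever write the same slot, which is why no lock is needed.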

Saturday, November 18, 2006

AMD's Multi-Core Not Just Multiple Cores

Hopefully this will put an end to my incessant postings on this topic. It appears AMD is indeed developing a processor with vector units, and using them in the actual processor pipeline instead of just for graphics processing. This means their CPU will be able to crunch straight-line algorithms (like Folding@Home) much more easily, by virtue of an on-die unit that caters to that type of processing.

Monday, November 06, 2006

Evil Creeping At Your Door

It's odd how concomitant my Free Software / Open Source Software experiences have been this week.

I heard Richard Stallman speak at the local law school recently and it was... interesting. I recorded it so I could quote more exactly, but my MP3 player took this opportunity to corrupt the filesystem and junk my recorded files. Nice.

Basically he thinks proprietary software is criminal. He also picks lots of things out of his hair. He also likes to play the recorder to parrots. He also thinks taxing CD media is a good way to subsidize music. I'm not kidding on any of these.

He spoke of the "tivoization" of software: basically digitally signing one's binaries to ensure they're completely tied to a given hardware platform. Linus doesn't care if his kernel is "tivoized"; he's just happy to have people contributing to the kernel however they see fit. Stallman has committed himself to a lifelong fight against it. He loves to say "GNU + Leeenux" as opposed to just "Linux" as most are accustomed to. Some people believe this is a minor distinction... that Linus' "Open Source Software" is pretty much the same as "Free Software." Wrong. Stallman believes all software should be tinkerable and modifiable, eminently hackable like your car's carburetor. Making something unmodifiable, like not being able to recompile your TiVo's kernel, is like welding your car's hood shut.
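To make "tivoization" concrete: the device only runs a kernel that carries the vendor's signature, so having the source (and the right to modify it) doesn't help if you can't sign your rebuilt binary. The sketch below is purely illustrative, and the key and function names are made up. HMAC-SHA256 from Python's standard library stands in for the public-key signature a real device would check. With HMAC the device would have to hold the secret itself, which real designs avoid.

```python
import hashlib
import hmac

# Hypothetical key burned into the device at the factory (illustration only).
DEVICE_KEY = b"vendor-secret-in-rom"


def sign_image(image: bytes, key: bytes = DEVICE_KEY) -> bytes:
    """Vendor side: tag the kernel image at build time."""
    return hmac.new(key, image, hashlib.sha256).digest()


def bootloader_accepts(image: bytes, signature: bytes, key: bytes = DEVICE_KEY) -> bool:
    """Device side: boot only images whose tag matches. Modifying and
    recompiling the source yields a different image with no valid tag."""
    expected = hmac.new(key, image, hashlib.sha256).digest()
    return hmac.compare_digest(expected, signature)


stock = b"vendor kernel image"
tag = sign_image(stock)
print(bootloader_accepts(stock, tag))                 # True
print(bootloader_accepts(stock + b" + my fix", tag))  # False
```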

Meanwhile, I'm trying to help push Linux adoption at home by having our office adopt SLES 10. No sooner had I received approval than Novell announced their partnership with Microsoft. The rift between Free Software and Open Source Software was cleaved even further; now they're on opposite sides of a huge digital divide.

Some see this as an incarnation of Gandhi's famous quote:

First they ignore you, then they laugh at you, then they fight you, then you win.

But it's not. It's more like "they ignore you, then they laugh at you, then they fight you, then they build up an ominous intellectual property portfolio, then offer indemnity only if you recognize we're right and you're wrong."

As The Register recently pointed out, Novell has effectively become a licensee of Microsoft intellectual property. Now Microsoft can point to SuSE as their "competition in the market," sue the other Linux distros and steer Novell in whatever direction they wish. Microsoft now has enormous leverage over SuSE, because Novell can no longer afford to exit the Microsoft deal. The moment they do, Microsoft can litigate the pants off of Novell. Novell has now acknowledged Linux uses Microsoft intellectual property, because they've effectively been given free rein to use said IP by Microsoft. The moment they exit the covenant with Microsoft, they're screwed. Microsoft can always argue that since SuSE had access to their IP, it has to be embedded in their product offering.

Novell will no doubt say they've just entered into a covenant not to sue with Microsoft, and this just allows open source developers to innovate without worry of litigation. But this just doesn't make any sense; Microsoft is paying Novell. Is Novell giving anything in return? No. What this gives Microsoft is their own "blessed" version of Linux, expansion of its intellectual property dominion, and the ability to insert their own "viral licensing."

Microsoft did this once in 1999, by promoting Linux during their antitrust trials. Their trial started the .com bubble bursting, but greatly increased adoption of Linux. Now they're trying to do the same thing for Europe, pointing to their interoperability with SuSE.

And now I need to go find a new Linux distro.

EDIT: I just had to add this quote from a Computer Business Review Online article, taken from Microsoft's chief executive, Steve Ballmer:

"Novell is actually just a proxy for its customers, and it's only for its customers," [Steve Ballmer] added. "This does not apply to any forms of Linux other than Novell's SUSE Linux. And if people want to have peace and interoperability, they'll look at Novell's SUSE Linux. If they make other choices, they have all of the compliance and intellectual property issues that are associated with that."

If that's not a threat, I don't know what is.

Monday, October 23, 2006

Waxing Rhapsodic

This was a week to remember the good ol' days. The early archives of Computer Gaming World were released to the public, heralding the magazine's imminent rebranding. Be sure to check out the ads... Apple II, DOS 3.2 required. John Romero released the source for all the Quake maps, allowing us to see the actual art and design of each level before it was BSP-fragged. And my vote for the longest-running easter egg goes to Nintendo's Totaka Kazumi.

Sunday, October 22, 2006

Nvidia's CPU

Now that ATI and AMD are one, Nvidia is working on a CPU as well. A lot of people are saying they're working on a "GPU on a chip," but I seriously doubt it. Many seem to be wagering this will go toward the embedded or low-power market, just like when VIA acquired Cyrix. But doing so would be extremely narrow-minded of ATI and Nvidia, even considering the boom of embedded devices and the increasing horsepower needed by set-top boxes.

I'm much more inclined to think that Nvidia is working with Intel (which would be a huge surprise, considering how they've been fighting over SLI on nForce vs. Intel chipsets) to compete with AMD & ATI's work on bringing vector processing units to CPUs.

I incessantly bring this topic up, but I had to mention this since it seems that my predictions are actually going to happen. I don't necessarily think this will cause GPUs-on-CPUs to happen, although it might be a by-product. Instead I think this is going to allow for increased parallelization, faster MMX instructions (or 3DNow! if that's your taste) and a movement of putting the work of those physics accelerator cards back on the CPU die.

We may see a CPU with four cores, each with integer and floating-point units, plus a handful of separate vector processors (a la IBM's Cell) alongside them. Given how the Cell reportedly absolutely sucks for many types of algorithms, this may give developers the best of both worlds. Rapidly branching and conditional logic can be done on the integer units with branch prediction and short instruction pipelines, while long, grinding algorithms can go to the vector processor. This has already worked for projects like Folding@Home, and could work for many similar algorithms.

Or AMD/ATI and Nvidia could just go for stupid embedded GPUs sitting on-die with the CPU. But they'd be passing up something much cooler.

ODE to Qt

I've been working on a small game with Qt and ODE, both cross-platform APIs that are GPL friendly. While I'm still trying to decide whether or not I like Qt's dual licensing, I'm definitely sold on its ease of use and elegance of design. And ODE has been great so far... a lot of trial-and-error, and it was late to the 2D scene, but it's released under a BSD license, which makes it very easy to use.

Wednesday, October 11, 2006

AI Modelling Clay

Physics sandboxes have let us play with tossing objects around, particle interactions and launching things across the room. In-game character and model generators let users have fun creating caricatures of themselves for avatars. So why not have AI sandboxes that let us create weird and wacky AI patterns?

linux.com recently turned me on to N.E.R.O., an interesting title from a university class written with the Torque engine and the open-sourced real-time NeuroEvolution of Augmenting Topologies (rtNEAT) library authored by Kenneth Stanley. If nothing else, it shows why AI can be hard and why current AI in FPSes doesn't seem to "get it" all the time.

Think Monster Rancher meets Creatures and you can kinda get an idea of the attraction. It's definitely in a new "sandbox" genre of its own. It's worth at least 15 minutes of your time... and it's a free download. The tutorials alone are worth it.

Sunday, October 08, 2006

To Brew or Not to Brew?

After my recent addition to the family, I've been flogged with writing papers, doing research and trying to emerge as the Prime Minister of Uptime at work (and failing). But I've also been in the midst of a life-affirming decision: to hack my new DS or not to hack it?

Allowing execution of unchecked code isn't as heinous as it was initially; evidently gone are the days of reflashing one's DS in order to skip cartridge validation. Now all you need is one cartridge that passes itself off as "verified" and then allows arbitrary file execution from another source, a second GBA cartridge to act as a flash memory reader, some type of removable flash memory such as MicroSD, and a flash memory interface for one's PC to upload applications to said removable flash device. No more toothpicks wrapped in tinfoil, no more hoping your DS doesn't get bricked. If you're willing to spend yet another $150, you can have a DS that runs homebrew software.

While there are some compelling reasons to run homebrew, I've decided against it. Doubling the price of my DS Lite just isn't worth it, even though the idea of writing software that would run on my DS does give my spine a-tingle. I could argue I'd rather not support cheaters who mod ROMs or warez thieves who distribute them... but that's not necessarily it either. I have this major hangup where adding too much hardware or doing too many workarounds becomes a splinter in my mind. When you have to re-engineer so many things, it becomes too inelegant to be palatable.

Now I've seen the DS Lite flash drives, and how they seamlessly merge with the base unit. Truly, a modded DS can be indistinguishable from an unmodded one. But the fact that someone would have to pay double the price for a modded DS is just too much.

That doesn't mean, however, that I wouldn't absolutely love to find a way to author my own DS cartridge. If I could find a way to produce a standalone DS cart and upload software to it, I'd be all over that in minutes.

Tuesday, September 26, 2006

Friday, September 22, 2006

DS Development

Out of sheer, morbid curiosity I decided to see what it would take to actually develop an authentic DS cartridge. As the panelists at GDC's Burning Down the House rant suggest, Nintendo is rather tight-fisted with development tools. The process of obtaining development tools requires Nintendo's explicit approval, and it appears none shall pass without a few cartoonish platformers under your belt.

To a point I can understand it... I just wish Nintendo were more open to third-party development. Openness to a myriad of third-party developers is what gives Microsoft and Sony all that additional market exposure. They just have none of the schwag. I guess it's Nintendo trying to reinforce their "brand," but if independent developers are supposed to be the saving grace of the games industry, how are they going to keep Nintendo's platforms afloat?

I looked at the technical details of DS cartridges as well as the DS hardware itself, and it seems like it would be a fun platform to develop on. The problem seems to be in the manufacture of the cartridges, and the on-the-fly dynamic encryption the cartridge uses when communicating with the unit.

Yes, there is a mature homebrew community for the DS, but that's not what I'm looking for. Getting people to run an installer is tough enough... but trying to have a multitude of people try something that requires a piece of hardware to replace cartridge headers, a flash memory interface, a screwdriver and paperclip crammed into the right hole at the right time is waaaaaaaaaay too much.

Looking at the current state of console homebrew, things don't look too rosy on other platforms either. The PSP requires downgraded firmware but is a bit easier to work with, since it aims to be more of a "portable convergent device" than anything else. The other modern handheld platforms... wait... there are none. Well, there's the GP2X, which was pretty much designed to be a conduit for independent development, and I respect that, but... well... eh. It just doesn't have the look or immediate marketability to the public at large.

So the bottom line is there's no real chance at marketable, independent game development on the DS. And while it's easier to deploy an independent title to a PSP, it is only narrowly more so. The PSP does have a removable Memory Stick as well as a Web browser, which gives it the advantage of being able to easily move files from a server to the console. But it's still not to the point where you could legitimately sell your indy title on a web site for $5 a pop.

Sunday, September 17, 2006

DS Craving

For the past two weeks, ever since Alon retold his story of giving away ten billion DS Lites, I have had an odd, irrational craving to own one. I have no freakin' clue why.

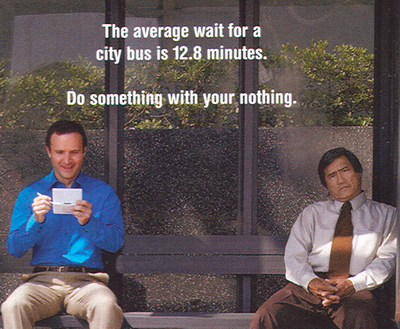

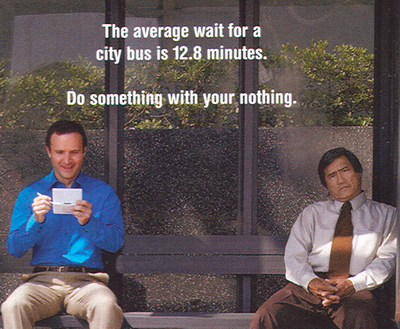

Now that the next round of the console wars is coming to a head, I've been watching Wii and PS3 news with tepid anticipation. When I'm at home, I really don't have any free time. But that 12.8-minute ad must have imbued itself into the part of my brain that rationalizes irrational purchases, because I came to the realization that the only console I'd have a chance of playing would be a portable one.

Now it's not like I just figured out what a DS is. Or that I was unfamiliar with the titles currently out there. But for some unknown reason a confluence of the form factor, Alon's giveaway and the realization that my time is transient all gave me this unprompted hankerin'. Now all I can think about is how I don't have a DS. I don't even necessarily need one. But dammit... somehow the irrational rationalization neurons are firing in freakin' overdrive.

GAAAAAAAAAAAAAAAHHHHHHHHHHHHH!!!!!!!!!!

I wonder how terrible of a process it is to become an "authorized developer".

Sunday, September 10, 2006

Gamer Shame

I just saw this notice posted for a local school about their computer education program:

...goals are accomplished by using age and developmentally appropriate software. No video games or movies are used in the enrichment program.

So... we're saying video games and movies can't be educational?

Now, I totally realize there's a valid point behind what they're saying. Some parents may let their kids log a little too much time in front of World of Warcraft or ye olde boob-tube. But still, this reinforces a stereotype that video games feed the dregs of society.

In the fantastic Gamasutra Podcast, GDC Radio's Tom Kim interviews Computer Gaming World's Jeff Green. It's a great interview about the media portrayal of the gaming industry, and a couple of times they touch on a great topic: gamer shame.

One thing Jeff brings up several times is that he's excited that Microsoft's new rebranding efforts, including his relaunched magazine, will start pushing gaming further into the mainstream. He mentions numerous times how he's hoping the new marketing initiative will mean gamers no longer "feel like they're shopping in the adult DVD rack" of their local outlet. Jeff brings to light the fact that gamers often feel like they're being judged while buying games... as if they're doing something unseemly.

It's true. I can't place my finger on why, but it's absolutely freakin' true. Do you know how long it took me to come out of the closet about being an indy game developer?

They mention a New York Times article by Seth Schiesel (forgive me if I got the name wrong) about how the industry itself has an image problem and often doesn't project itself into the mainstream. Instead, "hard core" gamers are outsiders, while people who watch "Lost" for eighteen consecutive hours are considered perfectly mainstream. Everyone is hardcore about something... some things are just less "geekish" than others.

Saturday, September 09, 2006

Random Product Announcements

Among some recent product announcements that I found interesting:

Ubisoft is evidently releasing a game based more on social interaction than on headshots. This may be a sign that studios are finally considering a gender more interested in strategy and social engineering than in normal maps on chainguns.

Hack a Day got me mildly interested in Chumby. I have no real idea what the hell it is, but it appears to be eminently hackable. That's what attracted me to the Squeezebox, and that's proven to be a pretty sound investment.

One more corny Nintendo Wii catchphrase: the "Wiimake". Wiimake? Get it?!?!? HA!

Thursday, September 07, 2006

Exactly How Many PCI-E Slots Does One Need?

I'm pretty much hammering this subject into the ground, but then again so are component makers.

Recently we saw Ageia's physics accelerator come to market as an add-on expansion card, sitting alongside your existing video accelerator and sound card. Next we saw the Killer NIC reviewed by IGN, a "network interface accelerator" that promises to offload the assembly of TCP and UDP packets to take load off the CPU. It's actually an embedded Linux instance that runs your TCP stack instead of Windows. Windows just hands the Killer NIC raw datagrams, and the card does all the work of turning them into bare signals on the wire.
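The card's pitch is easier to judge with a concrete picture of the per-packet work involved. Below is a minimal Python sketch of one small piece of it: assembling a UDP header and computing its checksum (the RFC 1071 ones' complement sum over the RFC 768 pseudo-header). The function names and example addresses are hypothetical, and nothing here reflects how the Killer NIC actually implements its stack.

```python
import socket
import struct

UDP_PROTOCOL = 17  # IP protocol number for UDP


def ones_complement_checksum(data: bytes) -> int:
    """RFC 1071 Internet checksum: ones' complement of the ones' complement sum."""
    if len(data) % 2:
        data += b"\x00"                            # pad to whole 16-bit words
    total = 0
    for (word,) in struct.iter_unpack("!H", data):
        total += word
        total = (total & 0xFFFF) + (total >> 16)   # fold the carry back in
    return ~total & 0xFFFF


def build_udp_datagram(src_ip: str, dst_ip: str, src_port: int,
                       dst_port: int, payload: bytes) -> bytes:
    length = 8 + len(payload)                      # 8-byte UDP header + data
    # IPv4 pseudo-header: covered by the checksum but never transmitted.
    pseudo = struct.pack("!4s4sBBH", socket.inet_aton(src_ip),
                         socket.inet_aton(dst_ip), 0, UDP_PROTOCOL, length)
    header = struct.pack("!HHHH", src_port, dst_port, length, 0)
    checksum = ones_complement_checksum(pseudo + header + payload)
    if checksum == 0:
        checksum = 0xFFFF                          # 0 means "no checksum" in UDP/IPv4
    return struct.pack("!HHHH", src_port, dst_port, length, checksum) + payload
```

A receiver can verify the result by running the same checksum over the pseudo-header plus the whole datagram; a correct packet sums to zero. Doing that for every packet is cheap per packet but adds up, which is the load the card claims to take off the CPU.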

Now there's supposedly an accelerator in the works, the Intia Processor, dedicated to non-player character AI acceleration. It would offload AI processing from the CPU and instead expose a dedicated API for pathfinding, terrain adaptation and line-of-sight detection.
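For a sense of what "line-of-sight detection" means as an API call, here is a minimal grid-based sketch in Python using Bresenham's line algorithm to walk the cells between two points. The grid format (a list of strings with `#` for walls) and the function names are assumptions for illustration only; nothing here comes from the Intia SDK.

```python
def bresenham(x0, y0, x1, y1):
    """Yield the grid cells on the line from (x0, y0) to (x1, y1), inclusive."""
    dx, dy = abs(x1 - x0), -abs(y1 - y0)
    sx = 1 if x0 < x1 else -1
    sy = 1 if y0 < y1 else -1
    err = dx + dy
    while True:
        yield x0, y0
        if x0 == x1 and y0 == y1:
            return
        e2 = 2 * err
        if e2 >= dy:      # step in x
            err += dy
            x0 += sx
        if e2 <= dx:      # step in y
            err += dx
            y0 += sy


def has_line_of_sight(grid, start, end):
    """True if no wall ('#') lies on any cell from start to end (endpoints included)."""
    for x, y in bresenham(*start, *end):
        if grid[y][x] == "#":
            return False
    return True
```

A game would run something like this thousands of times per frame for every NPC/target pair, which is why it's an obvious candidate for offloading, whether to a dedicated card or to an idle core.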

So let's count the possible gaming accelerators here:

- Sound (e.g. Sound Blaster X-Fi)
- Video (e.g. NVIDIA GeForce)
- Video (a second NVIDIA GeForce for SLI)
- Physics (Ageia PhysX)
- Networking (Killer NIC)
- AI (Intia Processor)

So that eats at least six slots, but if you have double-wide video cards, more like eight. Oh yeah, and you'll spend nearly $1,500 on just the above hardware accelerators alone.

This is a cycle that repeats itself in the computer world, tho. Popular software algorithms become APIs. APIs get baked into hardware. The hardware proves too inflexible, so it becomes firmware. The firmware isn't flexible enough either, so it goes back into software. And on and on we go.

But this hasn't necessarily happened for OpenGL or graphics acceleration. Why? Because there you're not baking an API into silicon; you're using a different type of processing unit altogether to solve a different class of problems. Multiple vector processing pipelines can streamline linear algorithms much more effectively than complex instruction set CPUs. The same analogy applies to floating point units when they were introduced alongside older CPUs that could only handle integer math.

Not only that, we're seeing chip makers shy away from raw clock speed and instead cram as many CPU cores as possible onto a single die. That makes every CPU stamped out of the factory a multiprocessor machine. Once APIs become more thread-safe, this crazy specialized hardware will instead just be an API call sent to one of the four idle CPUs on someone's desktop.
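Here is a minimal Python sketch of the "just an API call to an idle core" idea, using the standard library's `concurrent.futures`. The workload function is a hypothetical stand-in for the kind of job a dedicated card might take. One caveat worth stating plainly: in CPython the global interpreter lock keeps threads from running pure-Python math in parallel, so for real CPU-bound work `ProcessPoolExecutor` (same interface) would be the better fit; threads are used here only to keep the sketch simple.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def path_length(waypoints):
    """Hypothetical stand-in workload: total Manhattan length of a waypoint path."""
    return sum(abs(x1 - x0) + abs(y1 - y0)
               for (x0, y0), (x1, y1) in zip(waypoints, waypoints[1:]))


def offload(jobs, workers=None):
    """Hand each job to a pool of workers instead of a dedicated accelerator."""
    workers = workers or os.cpu_count() or 4
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [pool.submit(path_length, job) for job in jobs]
        # A game loop could keep running here while the workers chew on the jobs.
        return [f.result() for f in futures]
```

The design point is the interface: the caller submits work and collects a future, and whether that future is serviced by a spare core or an add-in card is an implementation detail.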

I think the eventual result of this crazy hardwareization (feel free to use that one) is that people are going to want to fit their algorithms into one of three processing units: the CPU pool (by "pool" I mean a pool of processors upon which to dump your asymmetric threads), the VPU (vector processing unit) or the FPU. The CPU & FPU have already merged, but as any C/C++ programmer can attest, the decision to use integer math as opposed to floating point math still weighs heavily on the programmer's mind.
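To make the integer-versus-floating-point trade-off concrete, here is a minimal 16.16 fixed-point sketch in Python, the classic trick for doing fractional math on integer units. The 16-bit fractional split and the function names are illustrative choices, not anything from a particular engine.

```python
FRAC_BITS = 16
ONE = 1 << FRAC_BITS          # 1.0 in 16.16 fixed point


def to_fixed(x: float) -> int:
    """Round a float to the nearest 1/65536 step."""
    return round(x * ONE)


def fixed_mul(a: int, b: int) -> int:
    # The raw product carries 32 fractional bits; shift back down to 16.
    # Python's >> floors on negatives, like an arithmetic shift in C.
    return (a * b) >> FRAC_BITS


def to_float(a: int) -> float:
    return a / ONE
```

Values that land exactly on the grid (1.5 × 2.25 = 3.375) come out exact using nothing but integer ops, while something like 0.1 gets snapped to the nearest 1/65536. Tracking that rounding by hand is exactly the bookkeeping that keeps the choice on the programmer's mind.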

Given that people are already compiling and running applications on GPUs that have nothing at all to do with graphics, it's only a matter of time before chipset manufacturers come up with a way to capitalize on this new general purpose processing unit.

Sunday, September 03, 2006

My Platforms are Still Supported: New Product Announcements

A few interesting product announcements of note. First, it turns out that Assassin's Creed (which I mentioned earlier for its innovative reflection of reality) isn't going to be a PS3 exclusive after all - it also will have a PC version released. Good news for those of us who don't own, nor plan to own, a console to game on. I've lived in HD for about ten years now, man. Thanks anyway.

At any rate, this is fantastic to hear. I was psyched about how this title would handle physics, collision detection and character constraints, and I'm looking forward to picking up a copy.

On a somewhat related topic, SPEC has now released SPECviewperf 9 for Linux. I think this is a fairly big deal - the fact that SPEC believes Linux is a platform that can handle actual intense rendering shows a level of confidence in the platform that everyone but NVIDIA has been slow to realize.

Saturday, August 26, 2006

Rampant Flashbacks

I finally watched Carmack's presentation at QuakeCon. He mostly discussed the fun of hacking on new and different platforms, how parallelization is inevitable but also an extreme engineering chore, and open sourcing older engines.

He brought up an interesting point: he was surprised that no one has yet launched a commercial game based on one of his GPL'ed engines. At first I thought how... unique it was for him to want someone to capitalize on something he dedicated his life to and then gave away for free. Then I started to wonder why that was. True, everyone took the open-sourced engines and ported them to every platform under the sun. That's nifty. But it is somewhat surprising that no one has launched a commercial game on an engine that's not only free but also tried-and-true in the marketplace.

In the latest GDC Radio podcast about marketing departments versus developers, they mention how modding or using an existing engine is usually a quick way to demo a game concept and prove out its gameplay. Carmack talks about that too, mentioning how the mod community is very important and can realize all sorts of gameplay without huge R&D. However, he also mentions that mods (or at least total conversions) are becoming increasingly rare, probably because the emphasis has shifted to content creation, and without a team of artists it takes exceptionally long to generate good-looking content.

It's interesting to sit back and watch the trials of the CrystalSpace community and how they mirror... or at least prove... the difficulties of independent game development. They recently held their first international developer conference, and the topics discussed were telling. It seems like only in the past year has the emphasis really been on content creation and building working demos. The engine is absolutely fantastic, but now comes the sweat and tears of taking a title the rest of the way. Take a look at the projects page for CrystalSpace and compare that to the number of titles actually completed (or at least still actively maintained). It's tough to get off the ground.

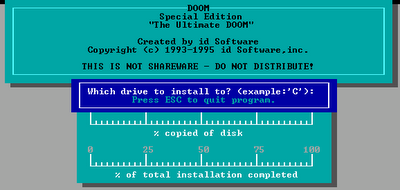

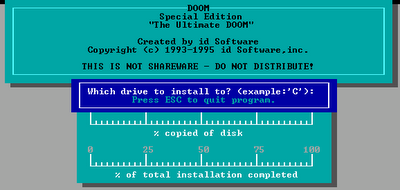

All this talk of the original mod community and Doom made me a bit nostalgic for my roots. For the hell of it I fired up my good ol' Doom installer... and took it for a run.

Seeing that ol' DOS installer brought back a rush of old memories... if I closed my eyes I could hear the hum of my Acer 486/66.

Friday, August 25, 2006

GREAT DAY IN THE MORNIN'...

A Penny Arcade episodic gaming title. I crap you not.

There can be none higher. Everyone, turn in your compilers and heightmaps. The battle has been won. And Gabe and Tycho carry the prize.

Thursday, August 24, 2006

Darwinia's Defcon

I was so uber-psyched about Darwinia it was ridiculous. I was hopelessly addicted to Introversion's Uplink, which is still by all measures a great game, so I was sure Darwinia was going to be the culmination of my being. Alas, mere weeks after I started playing, I stopped. It just didn't grab my attention enough to warrant stealing away the little free time I have.

Now the press is showing the same ridiculous anticipation towards Defcon (check out the trailers), Introversion's latest brainchild. It seems to keep the same sort of nihilistic layout, UI and score that Uplink had, which is perfect for the isolated, distant war machine they're hoping to portray. But my Darwinia experience keeps me at bay, wondering if they're going to get this one right.

Looks like Uplink with warheads. If that's what it ends up being, this may just be another hit.

A Brief Tangent on Printing

Every so often, there's a weird lil' Windows twitch that I'm called on to support. This time, a printer connected through a Samba print server was taking for-freakin'-ever to spool documents to the remote queue, along the lines of 90 seconds at a time. It wasn't a pause due to IP resolution or the like... instead Windows was sending massive freakin' amounts of traffic across the line. I'd eavesdrop using Ethereal and hear tons of noise... absolute floods of SMB traffic.

I love the Samba listserv posters. I really do. They had traced this back to an issue where Windows XP will open the properties of not just the printer you want to send documents to, but every other printer on the server. Get one bad apple, and you're sent into a backfill of network requests.

Luckily they had found a way to get rid of this brute interrogation by hacking off a few registry keys, which saved the day. But it's another minor annoyance that leeched time away until my very being was sucked dry without me noticing.

For those wondering, it was basically just a matter of hacking off the entries in HKEY_CURRENT_USER\Printers\DevModePerUser and HKEY_CURRENT_USER\Printers\DevModes2.

Back to our regularly scheduled program.
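For anyone who'd rather script that registry cleanup than click through regedit, here's a rough Python sketch using the standard-library winreg module. It assumes that clearing the values under HKEY_CURRENT_USER\Printers\DevModePerUser and HKEY_CURRENT_USER\Printers\DevModes2 (rather than deleting the keys themselves) is enough, which is one reading of "hacking off the entries". The function name is made up for illustration, it defaults to a dry run, and backing up the registry first is a good idea.

```python
import sys

# The two per-user keys from the Samba list fix, relative to HKEY_CURRENT_USER.
PRINTER_CACHE_KEYS = (
    r"Printers\DevModePerUser",
    r"Printers\DevModes2",
)


def clear_printer_devmodes(dry_run=True):
    """Delete every value under the cached printer-settings keys.

    Assumption: clearing the values (not the keys themselves) is enough.
    Returns a list of (key_path, value_name) pairs that were removed, or
    that would be removed when dry_run is True.
    """
    if sys.platform != "win32":
        raise OSError("winreg is only available on Windows")
    import winreg  # imported lazily so the module still loads elsewhere

    removed = []
    for path in PRINTER_CACHE_KEYS:
        try:
            key = winreg.OpenKey(
                winreg.HKEY_CURRENT_USER, path, 0,
                winreg.KEY_READ | winreg.KEY_SET_VALUE,
            )
        except FileNotFoundError:
            continue  # key absent: nothing cached for this user
        with key:
            # Collect names first; deleting while enumerating shifts indices.
            names = []
            index = 0
            while True:
                try:
                    names.append(winreg.EnumValue(key, index)[0])
                except OSError:
                    break  # no more values under this key
                index += 1
            for name in names:
                if not dry_run:
                    winreg.DeleteValue(key, name)
                removed.append((path, name))
    return removed
```

Run it once with the default `dry_run=True` to see what would go, then call `clear_printer_devmodes(dry_run=False)` as the affected user, since these live under that user's HKEY_CURRENT_USER hive.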

Wednesday, August 23, 2006

Xbox 360 Indie Development?

Rocky pointed me to an interesting announcement (first seen on Gamasutra, then expanded upon later) that Microsoft will be releasing a special "XNA Game Studio Express", which from what I can gather is a rebranded version of Visual C# Express and DirectX bundled together. Their selling point is offering the "XNA Creators Club" for $99, which sounds akin to the Microsoft Developer Network (MSDN) for independent game developers.

Now, this would make sense if they gave you an easy-to-use build and test environment for the 360. But instead it appears their way of getting code onto the Xbox is uploading all your source and content to the KreatorsKlub, compiling for the 360, then obtaining it via Xbox Live. "Games developed using XNA Game Studio Express cannot be shared through a memory card at this time."

Rebranding Visual Studio, DirectX and an MSDN subscription as the "XNA Game Studio" is kind of a stretch... but hopefully it can lower the barrier to setting up a development environment and let people focus on content creation earlier. And as any developer can attest, establishing a build environment can be excruciating at best.

It is interesting, however, that GarageGames has started promoting its Torque engine for XNA. This can bring a serious producer of indie titles to the 360... and could definitely offer an interesting range of titles (and markets) to Xbox Live. If Microsoft would offer an easier way to test & prototype on the 360, they could see a huge influx of titles, and could easily become the indie hacker's platform of choice.

Saturday, August 19, 2006

Power Equals Work Over Time

Notice anything missing?

Our friends at Antec have had my PSU for over a week now. It started making a very high-pitched squeal when running, which suggests that the switching circuits (I think) had started to go. So I sent it back for an RMA on Friday, and it arrived at Antec's door on Monday morning.

A replacement unit finally shipped from California last night... but that means it won't be at my doorstep until Wednesday at best. And since my computer lacks any means to provide power to its wondrous self, I'm left with this suck-ass laptop with an ATI card that displays whatever resolution it damn well feels like, even if that's an awful 1024x768 on an LCD monitor with a native resolution of 1280x1024.

Soo... all work that I need to do on my latest "projects" has slowed to a crawl. Crap.

Wednesday, August 09, 2006

The Enigma of TV Movies

Have you (or your significant other) ever been sitting on the couch, mindlessly watching an edited-for-network-TV movie, replete with commercials, even though you own the same unedited, high-definition, 5.1-audio movie in your DVD collection? You may even offer to drop said DVD into the strategically placed player, mere inches below the TV, only to be stopped short because "they're just watching it because it's on." Because it would be silly to watch it if it wasn't on.

It's the very same enigma that means the living room PC will never take off, as Slate observed. A media center PC would mean finding the remote, switching to the right input on the set, navigating through a few menus, deciding on an option, then actually hitting play. The alternative is randomly flipping through channels until you find a Full House rerun. Whilst you may have a more edifying experience with the former, the cerebellum would rather turn itself completely off and opt for the latter.

It's also the same reason why Internet Explorer owns the majority share of all browser traffic, in spite of its awesome list of well-observed glitches. It's why so many people play solitaire on their PC. Hell, it's why Windows won the OS war. It's just on. It's already there, and installing/inserting something different would require the cerebellum to turn back on, and unfortunately 50 hours of mind-numbing tomfoolery at the office has beaten it unconscious.

If you want to penetrate the vast sea of casual gamers out there, you have to make something easier to acquire than finding a DVD and watching it on the TV. Why are Flash games so popular? Bingo. Easier than a DVD.

Mebbe the next frontier isn't the game per se... it's the installer. Direct into your brain. Or whichever pieces of it remain working.

Friday, August 04, 2006

Distributed Development

Gamasutra recently released the conclusion to their two-part interview with John Baez, whose company, The Behemoth, created the 2D title Alien Hominid.

The interview largely focuses on how a small, naive start-up was able to "make it" (albeit still without making a profit) and the lessons they learned. They have even been able to make distributed development work - with two offices, and even individual developers, on opposite sides of the country.

It's an interesting contrast to the "small companies don't have a chance in hell" talk currently floating around. Admittedly, The Behemoth serves a largely niche market, but they're fine with that. And they're still paying salaries. Here's a company that went it alone, took a title that no one wanted to touch, wrote it with decentralized developers, and published it on the PS2, GameCube and 360. They also have merchandising to go along with it, banking on some very original intellectual property.

12.8 Minutes of Gaming

In the August 2006 Reader's Digest (shut up) I noticed the following ad on the penultimate page...

This ad for the Nintendo DS then goes on to push Brain Age, Nintendogs, Magnetica and True Swing Golf. Brain Age being, of course, the latest rage for casual gamers 30 and over.

It was interesting on several levels: a) this was in freakin' Reader's Digest, b) this was the first overt marketing pitch I've seen specifically targeting the 30+ crowd, which of course is the demographic that is not only the most plentiful but also has the most purchasing power, and c) this is obviously Nintendo getting ready to position itself (quite intelligently) not on raw horsepower, but on gameplay and ease-of-use. This is in direct contrast to Sony and Microsoft, and stands to turn decades of console marketing on its ear.

Given how peeps not unlike myself are relegated to just a few spare moments a week, this could be the key not only to courting a new demographic, but also to changing the types of titles. AAA games will still have their niche, but it's back to being about the gameplay instead of beautifully rendered shrapnel.

Sunday, July 30, 2006

Everybody Loves a Spore

I've been kinda harping on procedurally generated and user-generated content as the salvation of the Earth for a while, but I hadn't conceived of how our good friend Will Wright has taken it to the next level. It's beyond procedural content, beyond user-generated content... it's now "pollinated content."

The GDC was where several interesting Will Wright innovations were unveiled... such as the sure-to-be-a-AAA-title USBEmily. But he also presented an early demo of several gameplay prototypes and foretold thousands upon millions of objects, each procedurally generated and user-defined, wrapped up into 3 KB files and archived in a massive database.

This massive database of every player's creations would rank, sort and weigh each item and make it asynchronously available to every other player's universe. You could then "shop" for creations you like, browse archives and even populate your space with balanced flora and fauna automagically pulled from other users.
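To make the "pollinated content" idea a bit more concrete, here's a toy Python sketch of the pipeline described above: a creation is stored as its generating recipe (a seed plus a few parameters) rather than as finished geometry, which is one plausible way a creature fits in a few KB; a shared pool ranks creations by player rating; and a world is populated by pulling the top-rated creations of each kind up to a quota. Everything here (the `Creation` fields, the damped-average scoring, the quota scheme standing in for "balance") is a hypothetical illustration, not how Maxis actually built Spore.

```python
import json
import zlib
from dataclasses import dataclass, asdict, field


@dataclass
class Creation:
    """A player creation stored as its generating recipe, not its geometry.

    All fields are hypothetical; the point is that a recipe is tiny.
    """
    author: str
    kind: str                 # e.g. "plant", "herbivore", "carnivore"
    seed: int                 # seed for the (hypothetical) procedural generator
    params: list = field(default_factory=list)  # shape/colour knobs
    rating_sum: int = 0
    rating_count: int = 0

    def pack(self) -> bytes:
        """Serialize the recipe; JSON + zlib stands in for a real binary format."""
        return zlib.compress(json.dumps(asdict(self)).encode("utf-8"))

    @staticmethod
    def unpack(blob: bytes) -> "Creation":
        return Creation(**json.loads(zlib.decompress(blob).decode("utf-8")))

    def score(self) -> float:
        """Damped average rating: pulls sparsely rated items toward a neutral 3.0."""
        prior_mean, prior_weight = 3.0, 5
        return (self.rating_sum + prior_mean * prior_weight) / (
            self.rating_count + prior_weight
        )


def populate(pool, quotas):
    """Fill a world with the best-rated creations of each kind.

    quotas maps kind -> count, e.g. {"plant": 6, "herbivore": 3}.
    """
    world = []
    for kind, count in quotas.items():
        candidates = sorted(
            (c for c in pool if c.kind == kind),
            key=Creation.score,
            reverse=True,
        )
        world.extend(candidates[:count])
    return world
```

For example, `populate(pool, {"plant": 6, "herbivore": 3, "carnivore": 1})` would fill a world with mostly flora, some grazers and a lone predator, each the best-rated of its kind. The damped average is there so a creation with a single 5-star vote doesn't outrank one with a hundred solid 4-star votes.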

This struck me recently when waxing rhapsodic about Creatures, a game I was way addicted to back in the good ol' college days. There the strength of the game was creature building with learning & adaptive AI, breeding and genetic algorithms. It was quite cool and allowed for plenty of procedurally generated and user-generated content, which players loved to post to fan sites. So you'd browse fan sites, find Norns or DNA strands you liked, download 'em, hack the game, install them, then relaunch. Here, Will is streamlining the entire process: browsing, collecting and installing them is the game.
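The "breeding and genetic algorithms" part boils down to a couple of classic operators. Here's a minimal Python sketch of single-point crossover plus per-gene mutation over a bit-list genome. This is a generic textbook genetic algorithm, not Creatures' actual genome format; the representation and the function names are hypothetical.

```python
import random


def crossover(mom, dad, rng):
    """Single-point crossover: genes before a random cut from mom, the rest from dad."""
    if len(mom) != len(dad) or len(mom) < 2:
        raise ValueError("parents need equal-length genomes of at least 2 genes")
    cut = rng.randrange(1, len(mom))  # 1..len-1, so both parents contribute
    return mom[:cut] + dad[cut:]


def mutate(genome, rate, rng):
    """Flip each bit independently with probability `rate`."""
    return [gene ^ 1 if rng.random() < rate else gene for gene in genome]


def breed(mom, dad, rate=0.01, rng=None):
    """Produce one child genome from two parent bit-lists."""
    if rng is None:
        rng = random.Random()
    return mutate(crossover(mom, dad, rng), rate, rng)
```

With `mom = [0] * 8`, `dad = [1] * 8` and `rate=0.0`, every child is a run of zeros followed by a run of ones: a prefix from one parent and a suffix from the other. Nudging `rate` up adds the occasional surprise.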